.NET developers are familiar with .NET's own web development framework, ASP.NET. The ASP.NET framework supports a multitude of higher-level web development frameworks such as Web Forms, Web Pages, MVC, Web API and SignalR. ASP.NET was, and still is, a solid framework for developing modern web applications. However, there were a considerable number of reasons for which Microsoft decided to develop and introduce a new framework termed ASP.NET 5. A few of the key reasons for a new framework are:

- Open-source the code-base to gain community support and feedback

- Improve performance of the traditional ASP.NET runtime

- Reach developers with more frequent updates, in response to competing technologies

- Enable cross-platform development and hosting opportunities

- Support extensive command-line tooling

- Introduce a simple project structure to quickly and easily create ASP.NET web applications

Introducing ASP.NET 5

After more than two years of effort, on 18th November 2015, Microsoft officially released ASP.NET 5 Release Candidate 1 (RC1). ASP.NET 5 is a brand-new, ground-up implementation influenced by the traditional ASP.NET framework. Whether it is platform agnostic depends on the runtime you decide to use: the full .NET Framework runs on Windows only, while the .NET Core framework enables cross-platform behaviour. The choice of runtime is completely up to you. To support this, ASP.NET 5 RC1 was built to run on top of the .NET Execution Environment (DNX), together with a couple of supporting tools: the .NET Version Manager (DNVM) and the .NET Development Utilities (DNU). Below are the tasks handled by each tool:

DNVM – .NET Version Manager: DNVM acts as the version manager that helps you configure which version of the .NET runtime to use. It downloads the required versions of the runtime and sets the active one at the machine, process or user level, so that your application can pick it up at run time.

DNX – .NET Execution Environment: DNX provides a consistent development and execution environment across multiple operating systems. It is responsible for hosting the CLR, handling the dependencies and bootstrapping your application based on the settings in the configuration file that is defined as part of the application.

DNU – .NET Development Utilities: As the name suggests, DNU is a tool that supports various tasks, such as managing libraries or packaging and publishing your application.

ASP.NET 5 Rebranded to ASP.NET Core

Because ASP.NET 5 was an entirely new, ground-up implementation, its name caused a misunderstanding: many assumed that ASP.NET 5 was a newer version of the existing ASP.NET framework and would replace it, which was not the case. To clear this up, Microsoft officially decided to rebrand ASP.NET 5 as ASP.NET Core. This was communicated by Scott Hanselman on 19th January 2016.

Limitations of ASP.NET Core RC1

ASP.NET 5 was much appreciated by the .NET development community. However, ASP.NET 5 was by design geared towards web application development. An ASP.NET 5 application was a class library containing a Startup class (Startup.cs). The DNX tool ran the ASP.NET hosting library, which would dynamically locate the Startup class and bootstrap the application.

During this time, Microsoft determined that it was also important to support native, cross-platform console applications. For this reason Microsoft had to revamp the tooling and introduce a toolchain that would seamlessly support developing both console and web applications.

As stated on the Visual Studio blog, the new .NET toolchain is one of the most significant changes that .NET Core RC2 and ASP.NET Core RC2 bring.

Introducing ASP.NET Core RC2

On 16th May 2016, Microsoft officially released .NET Core RC2 and ASP.NET Core RC2. The RC2 versions of .NET Core and ASP.NET Core address the limitations encountered in RC1. As of RC2, an ASP.NET Core application is a console application. The console application is responsible for calling the ASP.NET hosting libraries, as opposed to RC1, where the hosting library located and started the application. Although the RC1 approach is still supported in RC2, the RC2 approach gives the application developer more visibility into, and control over, how the application works.

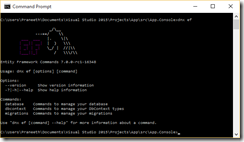
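
To make the RC2 model more concrete, below is a minimal sketch of such a console entry point. It assumes a project that references the RC2-era `Microsoft.AspNetCore.Hosting` and `Microsoft.AspNetCore.Server.Kestrel` packages; the body of `Startup` and the response text are illustrative only and not taken from any official template.

```csharp
using System.IO;
using Microsoft.AspNetCore.Builder;
using Microsoft.AspNetCore.Hosting;
using Microsoft.AspNetCore.Http;

public class Program
{
    // A plain console entry point: the application builds and starts
    // the web host itself, rather than being discovered by DNX.
    public static void Main(string[] args)
    {
        var host = new WebHostBuilder()
            .UseKestrel()                                   // cross-platform web server
            .UseContentRoot(Directory.GetCurrentDirectory())
            .UseStartup<Startup>()                          // explicitly names the Startup class
            .Build();

        host.Run();                                         // blocks until the host shuts down
    }
}

public class Startup
{
    // Illustrative pipeline: every request receives the same text response.
    public void Configure(IApplicationBuilder app)
    {
        app.Run(async context =>
        {
            await context.Response.WriteAsync("Hello from ASP.NET Core RC2");
        });
    }
}
```

Because `Main` owns the host, the developer decides which server is used, where the content root lives and which Startup class is loaded, which is the added visibility and control described above.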

Going further, ASP.NET Core RC2 makes things simpler by relying on a new toolchain called the .NET Command Line Interface (.NET CLI), which comes as part of .NET Core RC2. This tool replaces DNVM, DNX and DNU, which were part of the ASP.NET 5 RC1 build. The .NET CLI performs the tasks that each of the RC1 tools was responsible for, including project creation, package management and compilation of applications using the new .NET Core SDK.

Important Details

As confirmed by the Visual Studio blog and Scott Hunter, the runtime and libraries (CLR, libraries, compilers, etc.) of both .NET Core RC2 and ASP.NET Core RC2 will, for the most part, not change "much" by the time they reach RTM, which is expected by the end of June. This means we are free to develop go-live applications with the RC2 versions of these frameworks.

However, the tooling, such as the .NET CLI and Visual Studio support, is still in preview. Microsoft has officially split the delivery of the Visual Studio tools from the .NET Core and ASP.NET Core runtime and libraries. As mentioned by Scott Hunter, the tooling support for the .NET CLI and ASP.NET Core is not yet at RTM level but should be by the end of June.

Summary

The intention of this post is to demystify .NET Core and ASP.NET Core and to provide a breakdown of how the two are related in terms of the evolution of the frameworks. The content of this blog post is a compiled set of information that I have gathered from various online blogs. I hope it gives you enough information on how ASP.NET is expanding to reach new heights, where the framework is heading and how its various components interact. Please do let me know if there is anything I have failed to include or have misinterpreted.

Happy Coding!